By João Pedro Azevedo — Chief Statistician & Deputy Director, UNICEF (Data, Analytics, Planning & Monitoring)

For years, a central criticism of the financing of national data systems was “underutilization.”

Billions were invested in statistical infrastructure — yet many datasets remained difficult to access and operationalize. Navigating institutional barriers, complex metadata structures, and raw files requiring specialized tooling created high transaction costs. Those with technical capacity could transform official statistics into influential research. Others — including policymakers closest to the problems — faced structural barriers to effective use.

That landscape is changing rapidly.

Large Language Models (LLMs) can now interpret data, generate code, produce charts, and draft policy narratives, dramatically lowering the cost of technical mediation. Yet the human in the middle remains essential — no longer primarily as a technical operator, but as a steward of provenance, context, and institutional credibility.

This redefinition of expertise introduces a higher-stakes challenge: LLMs are only as reliable as the data, metadata, and provenance they consume.

If analytical acceleration is no longer constrained by skill, credibility must be constrained by infrastructure.

After fifteen years working on programmatic access to official statistics, I have come to a clear conclusion:

Data acquisition and preparation are not auxiliary tasks. They are methodological acts. And in the age of AI, they must be executable and auditable.

The Real Reproducibility Problem

In the age of AI, reproducibility is no longer only a scientific concern; it is a question of governance and public trust.

The reproducibility crisis is often framed as a methodological failure — insufficient robustness checks, weak disclosure standards, or lack of code sharing. Those issues matter. But many failures occur earlier, upstream, at the moment data enter the analytical workflow.

One part of the solution lies in the disciplined use of official statistics, which are more than simply data. They are institutionally governed measurements — the product of negotiated international standards, methodological judgments, documented revision policies, and accountability frameworks built through sustained human collaboration. They are not infallible; they evolve through revision, scrutiny, and methodological refinement. Their authority derives not from perfection, but from transparent processes and institutional accountability.

Because they are human-led and collectively negotiated, disagreements can be debated, resolved, and codified into improved standards that endure over time. When that governance context is detached from the numbers, what remains is a statistic stripped of the institutional architecture that made it authoritative. In such cases, public debate expands beyond methodological inquiry into broader questions of institutional credibility and trust.

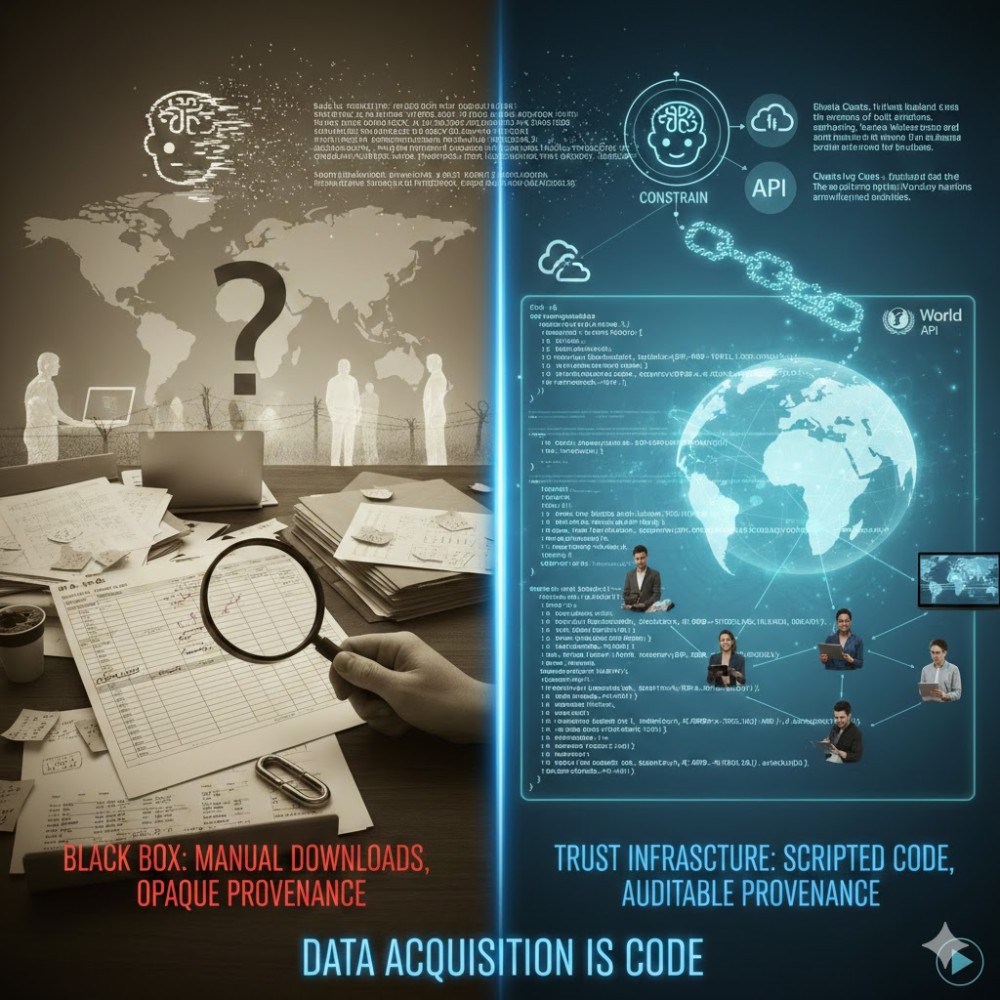

When analysis relies on manual downloads, undocumented filters, renamed files, and silent transformations, the chain of provenance is broken before any model is estimated. A regression may be correctly specified yet rest on an opaque data construction process. Without executable documentation of how indicators were retrieved, filtered, and merged, it becomes impossible to distinguish genuine policy insight from undocumented preprocessing artifacts.

Visual: Concept and design by the author; visual rendering generated by Gemini AI (2026).

Visual: Concept and design by the author; visual rendering generated by Gemini AI (2026).

In an AI-assisted environment, that opacity compounds. Automated systems can generate convincing outputs, but they cannot reconstruct invisible institutional deliberation.

Reproducibility is therefore not only about replicating coefficients. It is about reconstructing the full decision pathway — including the governance context — that produced the dataset.

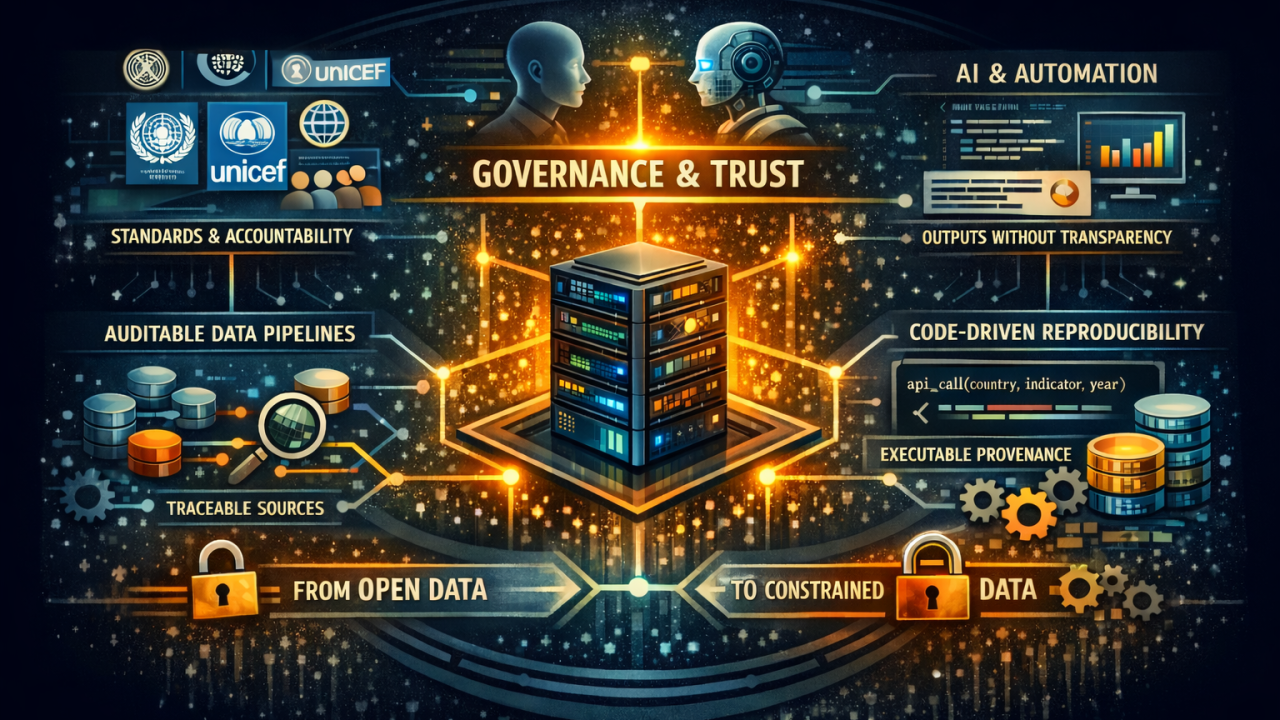

From Open Data to Constrained Data

In an AI-mediated world, openness alone is insufficient.

For high-stakes policy decisions, we need constrained data pipelines. By binding analysis to authoritative APIs and exposing indicator, country, and time selections as executable code, programmatic systems function as constraint mechanisms.

Constraint does not mean restriction; it means discipline — narrowing the space of admissible inputs to those grounded in documented standards.

In practice, this ensures traceability by default, supports acceleration without fabrication, and preserves machine-readable metadata — making provenance enforceable and institutional trust portable.

Visual: Concept and design by the author; visual rendering generated using OpenAI’s DALL·E (2026).

Visual: Concept and design by the author; visual rendering generated using OpenAI’s DALL·E (2026).

Lessons from Fifteen Years of Infrastructure

Programmatic access systems have evolved across languages and institutions, including implementations in R, Python, and Stata.

Three principles, developed through sustained practice, matter most.

Backward compatibility as trust infrastructure. Policy pipelines often run for years. Stable syntax — even as underlying APIs evolve — prevents infrastructure drift from silently breaking historical analysis. Backward compatibility is not convenience; it is institutional continuity encoded in code.

Domain-specific guardrails. Interfaces structured around meaningful analytical concepts — indicators, countries, topics — reduce ambiguity and lower the risk of misinterpretation, especially in AI-mediated workflows. Guardrails are safeguards for institutional meaning.

Self-documenting datasets. Embedding provenance directly into datasets — including exact query syntax, source database, and metadata — ensures that context persists even when files are shared, archived, or processed by automated systems. Provenance becomes portable.

Auditable Discovery

Recent metadata architectures bring code and documentation closer together by making catalog exploration executable. Indicator selection itself becomes auditable.

Instead of browsing portals and manually copying codes, users can script their discovery process. The moment an indicator is chosen for analysis, that decision is documented.

Reproducibility is extended upstream — to the moment of choice.

The Governance Implication

The old criticism was that data infrastructure was underutilized. Today, the risk is the opposite: data may be over-utilized without discipline.

We are moving from a world where humans search for statistics to one where machines answer on their behalf.

In that world, credibility depends less on analytical sophistication and more on infrastructure that preserves the institutional architecture embedded in official statistics.

Reproducibility must move from aspiration to default behavior. As analysis accelerates, constraints must strengthen so that high-stakes policy decisions remain grounded in verifiable evidence — even when machines stand between the data and the decision-maker.